AI Agents Are Becoming Critical Infrastructure. Stop Renting Them.

Executive summary

The release of OpenAI Frontier is a strong signal of where enterprise AI is heading: away from simple “chat with a model” use cases and toward AI coworkers—agentic systems capable of taking actions across real business systems, operating under policy, and improving over time. This evolution is inevitable. What remains an open question is how organizations should build and control these capabilities.

At first glance, proprietary platforms promise a reassuring end‑to‑end story: unified interfaces, prepackaged agents, built‑in execution, and evaluation loops. Yet beneath the polish, the underlying building blocks are well understood. They are architectural patterns, not proprietary magic.

From my perspective as Co‑CEO of Polynom, a consulting firm specializing in AI and large‑scale data systems, most enterprises are better served by starting from a modular, open‑source architecture. Such an approach reproduces the same layered design as platforms like Frontier (interfaces, agents, execution, shared context, and evaluation) while offering materially stronger control over sovereignty, governance, costs, and long‑term optionality.

This is not a purely technical debate. In the current geopolitical context—where Europe must position itself between US‑based hyperscalers and rapidly advancing Chinese ecosystems—the ability to retain control over critical AI infrastructure has become a strategic concern. The emergence of Frontier makes the question unavoidable: do we want our AI coworkers to be black boxes we rent, or systems we truly own and govern?

What an enterprise agent platform must deliver—regardless of vendor

Whether proprietary or open, any serious enterprise agent platform rests on the same non‑negotiable foundations.

1. A shared business context

Agents are only as useful as the context they can reliably access. This means:

- Connecting siloed data sources, documents, and operational systems.

- Exposing a semantic layer so agents understand business entities, KPIs, and workflows rather than raw tables or files.

- Enforcing access control and data permissions consistently across every interaction.

Without a shared and governed context, agents quickly degrade into brittle prompt assemblies that cannot scale beyond isolated demos.

2. A robust agent execution environment

Enterprise agents must be able to plan, act, recover from errors, and interact safely with tools. This requires:

- A reliable runtime for agent reasoning and orchestration.

- Controlled execution of code, file operations, and API calls.

- Deployment flexibility across on‑premise infrastructure, private cloud, and public cloud environments.

3. Continuous evaluation and optimization loops

Trust in AI coworkers is built through repeatability and measurement. A production platform therefore needs:

- Automated tests and regression suites for agent behavior.

- Deep observability into traces, latency, failures, and tool usage.

- Feedback loops that allow improvement without destabilizing production systems.

On top of these foundations sit user interfaces—chat, workflows, embedded copilots—and a cross‑cutting governance layer covering identity, policy, auditing, and compliance. The rest is implementation detail.

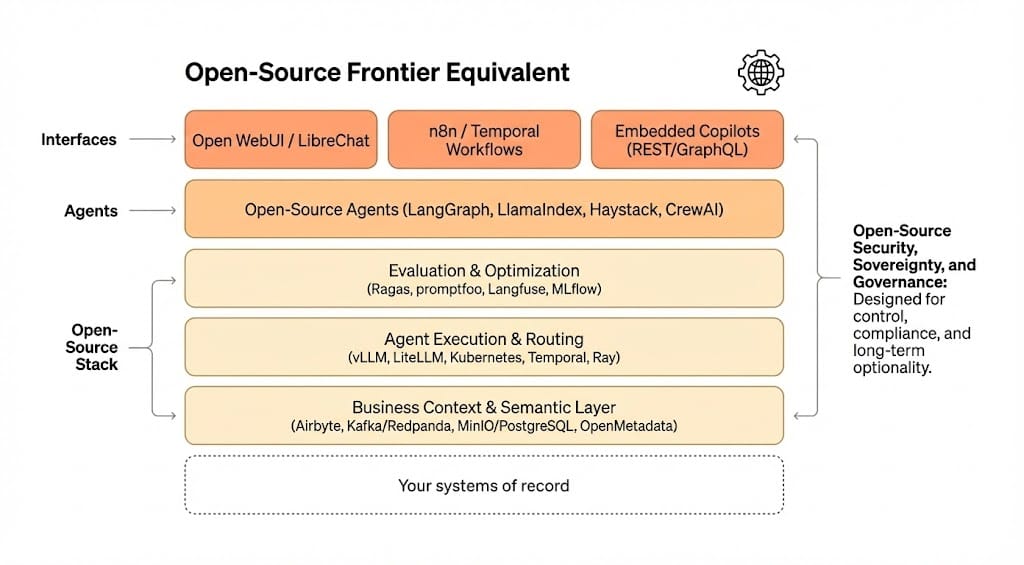

A layered, open‑source alternative to Frontier

An open‑source equivalent to Frontier is best understood as a layered architecture, where each concern is cleanly separated and independently evolvable.

Layer 1 — Interfaces: where work meets agents

The objective of the interface layer is simple: make AI coworkers available through the tools people already use, without locking agent logic to a single UI.

In practice, this typically includes:

- Self‑hosted chat interfaces, such as Open WebUI or LibreChat, offering a ChatGPT‑like experience with multi‑model routing and retrieval‑augmented generation.

- Workflow automation and orchestration, using tools like n8n for business automation or Temporal for durable, long‑running enterprise processes.

- Embedded copilots inside business applications, exposed through REST or GraphQL APIs and integrated via event‑driven mechanisms.

A key best practice is interface independence: the same agents should be callable from a chat UI, a workflow engine, or an application copilot without duplication.
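As a rough illustration of interface independence, the sketch below keeps one agent behind a single request/response contract and wraps it in thin adapters for chat, workflow, and copilot entry points. All names (`AgentRequest`, `summarize_ticket_agent`, the adapter functions, the payload keys) are hypothetical, and the agent body is a placeholder rather than a real model call.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class AgentRequest:
    user_id: str
    text: str


@dataclass(frozen=True)
class AgentResponse:
    text: str


# An agent is any callable that honors this one contract.
Agent = Callable[[AgentRequest], AgentResponse]


def summarize_ticket_agent(request: AgentRequest) -> AgentResponse:
    # Placeholder logic: a real agent would call a model and tools here.
    return AgentResponse(text=f"Summary for {request.user_id}: {request.text[:40]}")


def chat_adapter(agent: Agent, user_id: str, message: str) -> str:
    """Chat UI entry point: plain text in, plain text out."""
    return agent(AgentRequest(user_id=user_id, text=message)).text


def workflow_adapter(agent: Agent, step_input: Dict[str, str]) -> Dict[str, str]:
    """Workflow engine entry point: dict payload in, dict payload out."""
    request = AgentRequest(user_id=step_input["user_id"], text=step_input["text"])
    return {"status": "ok", "output": agent(request).text}


def copilot_adapter(agent: Agent, body: Dict[str, str]) -> Dict[str, str]:
    """Embedded copilot entry point: maps an assumed API payload shape."""
    request = AgentRequest(user_id=body["userId"], text=body["prompt"])
    return {"reply": agent(request).text}
```

The adapters only translate payload shapes. Because the agent logic lives in one place, adding a new interface means writing another adapter rather than another agent.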

Layer 2 — Agents: logic, roles, and collaboration

Agent logic should be decoupled from execution and infrastructure concerns. This separation is essential for maintainability and reuse.

Open‑source frameworks such as LangGraph, LlamaIndex, Haystack, or CrewAI provide complementary patterns for building stateful, multi‑step, and multi‑agent systems. In enterprise settings, we typically recommend standardizing on a primary framework—often LangGraph—for production agents, while maintaining a shared library of reusable tools (CRM access, ticket creation, reporting, knowledge search).

Clear conventions around versioning, testing, and promotion pipelines are what turn agents from experiments into dependable system components.
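One way to make a shared tool library and its versioning conventions concrete is a small registry that agents query by name and, optionally, by pinned version. This is a framework-agnostic sketch: `ToolRegistry`, `create_ticket`, and the version scheme are assumptions for illustration and do not correspond to the API of LangGraph or any other framework named above.

```python
from typing import Callable, Dict, Optional, Tuple

ToolFn = Callable[..., object]


class ToolRegistry:
    """Holds reusable tools keyed by (name, version)."""

    def __init__(self) -> None:
        self._tools: Dict[Tuple[str, int], ToolFn] = {}

    def register(self, name: str, version: int) -> Callable[[ToolFn], ToolFn]:
        def decorator(fn: ToolFn) -> ToolFn:
            key = (name, version)
            if key in self._tools:
                # Published versions are immutable; changes require a new version.
                raise ValueError(f"{name} v{version} is already registered")
            self._tools[key] = fn
            return fn

        return decorator

    def get(self, name: str, version: Optional[int] = None) -> ToolFn:
        versions = [v for (n, v) in self._tools if n == name]
        if not versions:
            raise KeyError(name)
        # Default to the latest version; production agents can pin one.
        chosen = max(versions) if version is None else version
        return self._tools[(name, chosen)]


registry = ToolRegistry()


@registry.register("create_ticket", version=1)
def create_ticket_v1(title: str) -> dict:
    return {"title": title}


@registry.register("create_ticket", version=2)
def create_ticket_v2(title: str, priority: str = "normal") -> dict:
    return {"title": title, "priority": priority}
```

An agent under test can call `registry.get("create_ticket")` to pick up the newest version, while a promoted production agent pins `version=1` until its regression suite passes against version 2.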

Layer 3 — Business context: the semantic backbone

In real deployments, most agent failures are not reasoning failures—they are context failures. The business context layer is therefore the true center of gravity.

An open stack usually combines:

- Ingestion and integration via Airbyte, Meltano, or Apache NiFi.

- Event streaming with Kafka‑compatible systems such as Redpanda.

- Durable storage, typically an object store like MinIO for documents, PostgreSQL (often with pgvector) for structured data, and OpenSearch for hybrid search.

- Metadata, lineage, and governance through DataHub or OpenMetadata, complemented by dbt for transformations and KPI definitions.

Rather than exposing raw data, leading organizations package this layer as a Context Service. It provides agents with permission‑filtered access to semantic entities, consistent KPI definitions, and durable identifiers. This is what allows multiple agents to collaborate without drifting apart.
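The idea of a Context Service can be sketched in a few lines: agents never see raw tables, only entities with durable identifiers, filtered by the caller's roles, plus a single source of KPI definitions. The class names, role names, and sample data below are invented for illustration; a real service would sit in front of the storage and metadata systems listed above.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, List


@dataclass(frozen=True)
class Entity:
    entity_id: str                  # durable identifier shared by all agents
    kind: str                       # semantic type, e.g. "customer"
    attributes: Dict[str, object]
    allowed_roles: FrozenSet[str]   # roles permitted to read this entity


class ContextService:
    def __init__(self, entities: List[Entity], kpi_definitions: Dict[str, str]) -> None:
        self._entities = {e.entity_id: e for e in entities}
        self._kpis = dict(kpi_definitions)

    def get_entity(self, entity_id: str, caller_roles: FrozenSet[str]) -> Entity:
        entity = self._entities.get(entity_id)
        # Missing and forbidden are reported the same way, so callers
        # cannot probe for the existence of entities they may not read.
        if entity is None or not (entity.allowed_roles & caller_roles):
            raise PermissionError(f"no access to {entity_id}")
        return entity

    def list_entities(self, kind: str, caller_roles: FrozenSet[str]) -> List[Entity]:
        return [
            e for e in self._entities.values()
            if e.kind == kind and (e.allowed_roles & caller_roles)
        ]

    def kpi_definition(self, name: str) -> str:
        # Every agent reads the same definition, which limits drift.
        return self._kpis[name]


service = ContextService(
    entities=[
        Entity("cust-001", "customer", {"name": "Acme"}, frozenset({"sales", "support"})),
        Entity("cust-002", "customer", {"name": "Globex"}, frozenset({"sales"})),
    ],
    kpi_definitions={"churn_rate": "customers lost in period / customers at start of period"},
)
```

With this sample data, an agent acting for a support user sees only `cust-001`, while a sales agent sees both customers, and both agents resolve `churn_rate` to the same definition.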

Layer 4 — Agent execution: reliability and safety

Once agents can act, execution becomes a first‑class concern.

Model serving and routing are commonly handled by vLLM or Text Generation Inference, with LiteLLM acting as a gateway to route requests across open and hosted models. This abstraction is critical for cost control and future portability.
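To show why a routing abstraction helps with cost control, here is a minimal sketch of the decision a gateway makes: pick the cheapest model that is capable enough for the task and satisfies a hosting constraint. It does not use the LiteLLM API; the model names, prices, and the integer "complexity" scale are all made-up assumptions.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ModelRoute:
    name: str
    cost_per_1k_tokens: float   # illustrative figure, not a real price
    max_complexity: int         # highest task complexity handled acceptably
    self_hosted: bool


def choose_route(
    routes: List[ModelRoute],
    task_complexity: int,
    require_self_hosted: bool = False,
) -> ModelRoute:
    """Return the cheapest route that can handle the task."""
    candidates = [
        r for r in routes
        if r.max_complexity >= task_complexity
        and (r.self_hosted or not require_self_hosted)
    ]
    if not candidates:
        raise LookupError("no model satisfies the routing constraints")
    return min(candidates, key=lambda r: r.cost_per_1k_tokens)


ROUTES = [
    ModelRoute("small-open-model", 0.10, max_complexity=2, self_hosted=True),
    ModelRoute("large-open-model", 0.60, max_complexity=4, self_hosted=True),
    ModelRoute("hosted-frontier-model", 2.00, max_complexity=5, self_hosted=False),
]
```

Because agents depend on `choose_route` rather than on a specific provider, swapping or adding a model changes the route table, not the agent code.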

For execution, Kubernetes provides a portable baseline, while Temporal and Ray enable durable and distributed workloads. When agents are allowed to run code or manipulate files, isolation is mandatory: gVisor or Kata Containers, combined with strict network egress controls, significantly reduce risk.
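Egress control is ultimately enforced at the network and sandbox level, but the underlying rule is easy to illustrate: deny by default and allow only named destinations. The sketch below is an application-level check under that assumption, with hypothetical host names; it complements network policy and sandboxing and does not replace them.

```python
from typing import FrozenSet
from urllib.parse import urlparse

# Hypothetical internal services an agent is permitted to call.
ALLOWED_HOSTS: FrozenSet[str] = frozenset(
    {"crm.internal.example", "tickets.internal.example"}
)


def is_egress_allowed(url: str, allowed_hosts: FrozenSet[str] = ALLOWED_HOSTS) -> bool:
    """Deny by default: only HTTPS calls to explicitly listed hosts pass."""
    parsed = urlparse(url)
    if parsed.scheme != "https":
        return False
    host = parsed.hostname  # already lowercased by urlparse
    # Exact match only, so look-alike suffixes such as
    # "crm.internal.example.evil.test" are rejected.
    return host is not None and host in allowed_hosts
```

A tool wrapper would call `is_egress_allowed` before issuing any outbound request and log every denial for audit.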

Observability should be end‑to‑end, relying on OpenTelemetry, Prometheus, Grafana, and centralized logging to ensure every action is traceable.

Layer 5 — Evaluation and optimization: from demos to dependability

Production‑grade agents require systematic evaluation. Open‑source tooling now makes this practical:

- Ragas for retrieval‑augmented generation quality.

- promptfoo or DeepEval for regression testing agent behaviors.

- Langfuse for tracing, feedback, and debugging.

- MLflow and DVC for experiment tracking and dataset versioning.

In mature setups, evaluation acts as a release gate: offline regression tests, online monitoring, and structured human feedback form three complementary improvement loops.
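A release gate can be as simple as comparing offline evaluation scores against minimum thresholds and blocking promotion on any shortfall. The sketch below assumes scores are already computed by whichever evaluation tool is in use; the metric names and threshold values are illustrative only.

```python
from typing import Dict, List, Tuple


def release_gate(
    scores: Dict[str, float],
    thresholds: Dict[str, float],
) -> Tuple[bool, List[str]]:
    """Return (passed, failures) for a candidate agent version."""
    failures: List[str] = []
    for metric, minimum in thresholds.items():
        score = scores.get(metric)
        if score is None:
            # A metric that was not measured counts as a failure.
            failures.append(f"{metric}: missing")
        elif score < minimum:
            failures.append(f"{metric}: {score:.2f} < {minimum:.2f}")
    return (not failures, failures)


# Illustrative thresholds; real values depend on the use case.
THRESHOLDS = {"answer_accuracy": 0.90, "tool_call_success": 0.95}
```

In a CI pipeline, a failed gate would stop the promotion step and surface the `failures` list to the team that owns the agent.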

Security, governance, and sovereignty by design

Security and governance are not optional add‑ons; they are architectural properties.

Open‑source stacks allow enterprises to implement verifiable controls using components such as Keycloak for identity and SSO, Open Policy Agent for authorization, and hardened secrets management solutions. Combined with comprehensive auditing and PII redaction, this approach can be configured to meet both regulatory and operational requirements.
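As a small example of combining auditing with PII redaction, the sketch below masks email addresses before a payload is written to an audit record. It is deliberately narrow: the regular expression covers common email shapes only, the record fields are hypothetical, and identity and authorization (the role of Keycloak and Open Policy Agent above) are not modeled.

```python
import re
from typing import Dict, Tuple

# Simplified pattern for common email shapes; not a full RFC 5322 parser.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def redact_emails(text: str) -> Tuple[str, int]:
    """Replace email addresses and report how many were masked."""
    return EMAIL_PATTERN.subn("[REDACTED_EMAIL]", text)


def audit_record(actor: str, action: str, payload: str) -> Dict[str, object]:
    """Build an audit entry whose payload never contains raw emails."""
    redacted, count = redact_emails(payload)
    return {
        "actor": actor,
        "action": action,
        "payload": redacted,
        "redactions": count,
    }
```

Recording the redaction count alongside the masked payload lets auditors verify that the control ran without exposing the data it protects.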

Beyond compliance, there is a broader issue of sovereignty. In today’s geopolitical environment—marked by increasing tension between the US, China, and a Europe seeking strategic autonomy—outsourcing the core of one’s AI decision‑making infrastructure to a single foreign vendor carries long‑term risk. Open architectures, deployable on European clouds or on‑premise, provide a credible path toward technological independence without sacrificing capability.

Why starting open is the more judicious choice

Several structural advantages consistently emerge when organizations begin with open components.

First, architectural portability preserves leverage. Agent systems rapidly become mission‑critical, and avoiding lock‑in keeps strategic options open as models, providers, and regulations evolve.

Second, governance becomes auditable rather than assumed. With open standards, controls can be inspected, tested, and adapted to regulatory change.

Third, cost economics become more predictable. Routing tasks to the most appropriate models, optimizing retrieval, and tuning infrastructure often result in a significantly lower total cost of ownership at scale.

Finally, integration and customization become platform capabilities rather than recurring projects. A single context service, standardized tools, and shared evaluation pipelines dramatically reduce agent sprawl and accelerate enterprise adoption.

Conclusion

OpenAI Frontier crystallizes a powerful vision of AI coworkers embedded in enterprise operations. Yet the vision itself does not mandate a proprietary path. At its core, an enterprise agent platform is an architecture: shared context, controlled execution, continuous evaluation, and strong governance, exposed through multiple interfaces.

By assembling these layers from open‑source components, organizations can match the functional ambition of proprietary platforms while retaining control over data, costs, compliance, and strategic autonomy. In a world where AI capabilities are becoming foundational infrastructure, this control is not a luxury—it is a prerequisite for long‑term resilience.

At Polynom, we consistently see that the most successful AI transformations are those built on open standards, clear semantics, and disciplined engineering. That is how AI coworkers move from promising pilots to dependable teammates—without turning the enterprise itself into a dependency.